Quality Control Over Time

A Historical Perspective to Overcome Today’s Dynamic Challenges

Things of quality have no fear of time.

There’s this thing that crisis does. It makes us reevaluate, think about what brought us to this point and what we can do about it. It’s an opportunity to reset, reframe, and redefine what’s possible. Crisis activates disruption, which sets the stage for innovation, the next act.

Startup

The first quality control systems began with relationships. Centuries ago, artisans related themselves to each of their products using a distinctive mark. They developed skills through apprenticeships and connected through guilds, working together to create and uphold quality standards.

This inspection and apprenticeship training approach to quality control (QC) lasted through the Industrial Revolution. It wasn’t until the 1920s, when physicist and statistician Walter A. Shewhart developed the first control chart, that we could use his statistical quality control (SQC) methods on a set of final product samples.

Through Shewhart’s work, we also learned to classify the origins of process variation as either naturally occurring or assignable. It’s one thing to identify product defects efficiently and quite another to do something about them effectively.
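To make the control chart idea concrete, here is a minimal sketch in Python. It assumes an individuals chart with limits estimated from the average moving range, which is one common control chart variant and not necessarily the form Shewhart first drew. The measurements and function names are hypothetical, chosen only for illustration. Points that fall outside the limits are candidates for assignable-cause variation; points inside are treated as naturally occurring variation.

```python
from statistics import mean

# Standard d2 constant for moving ranges of two consecutive points.
D2_FOR_N2 = 1.128


def individuals_chart_limits(baseline):
    """Return (lower, center, upper) limits for an individuals chart.

    Sigma is estimated from the average moving range of the baseline data.
    """
    if len(baseline) < 2:
        raise ValueError("need at least two baseline measurements")
    center = mean(baseline)
    moving_ranges = [abs(b - a) for a, b in zip(baseline, baseline[1:])]
    sigma_hat = mean(moving_ranges) / D2_FOR_N2
    return center - 3 * sigma_hat, center, center + 3 * sigma_hat


def flag_assignable(samples, lower, upper):
    """Return indices of samples that fall outside the control limits."""
    return [i for i, x in enumerate(samples) if x < lower or x > upper]


# Hypothetical baseline measurements from a process believed to be stable.
baseline = [10.1, 9.9, 10.0, 10.2, 9.8, 10.0, 10.1, 9.9]
lower, center, upper = individuals_chart_limits(baseline)

# Hypothetical new measurements to check against the limits.
new_samples = [10.0, 10.1, 11.5, 9.9]
print(flag_assignable(new_samples, lower, upper))
```

A flagged point is only a signal to go looking for a cause. Deciding what to do about it is the part that the management approaches described next set out to address.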

Management Integration

Some transformational quality work was done in the 1950s, when we start to see a management approach to QC. Engineer and mathematical physicist W. Edwards Deming added QC’s relational element by including psychology and theory-of-knowledge training, while engineer and management consultant Joseph M. Juran emphasized quality training for top and middle management, further integrating quality throughout the organization.

QC engineer Armand V. Feigenbaum also emphasized quality systems integration and the importance of quality as both an individual and team commitment in his description of Total Quality (TQ). With TQ, we begin to consider managing quality as managing the business, and that quality equals cost.

"The results of long-term relationships is better and better quality, and lower and lower costs."

Continuous Improvement

Continuous process improvement also enters the quality scene in the 1950s. Deming promoted QC as an iterative process that he called the Shewhart cycle, later known as Plan-Do-Check-Act. It continued to evolve into Six Sigma’s DMAIC model: Define, Measure, Analyze, Improve, and Control.

Standardization

The American Society for Testing and Materials (ASTM) established a committee on the Presentation of Data in the 1930s, with Shewhart as its first chair. Later that decade, Harold Dodge led a development group that published the first Manual on the Presentation of Data, which was later expanded to include limits of uncertainty and control charting. This work laid the foundation for today’s ASTM Committee E11 on Quality and Statistics.

After WWII, during Japan’s post-war miracle recovery period, Deming and Juran each took SQC and QC management teachings to Japanese companies. Organizational theorist Kaoru Ishikawa learned these QC management approaches, then augmented them by developing a uniquely Japanese methodology, the QC Circle Koryo.

Through the 1960s and 1970s, Ishikawa promoted the QC Circle to enable top-down, bottom-up, and process-wide quality control throughout the organization, introducing Total Quality Control (TQC). Believing in the necessity of standardization in QC, he helped develop Japanese and international QC standards. To this day, Japanese products are known for their quality.

Back in the States, good manufacturing practice (GMP) and good laboratory practice (GLP) regulations enter the scene. We are told to make our QC plan, document our plan, carry out our plan as documented, and show that we did what we said we were going to do.

"Quality must be integrated into the design and into each process."

Cost of Quality

Through the 1980s, quality management consultant Philip B. Crosby claimed that “quality is free!” His point was that a well-executed QC program more than makes up for the cost of implementation because you are more likely to “get it right the first time.”

Total Quality Management (TQM) comes into focus in the 1990s. The TQM movement also focuses on prevention-related costs and aims to bring statistical tools and organizational methodologies together. Where TQC was company-focused, TQM is customer-focused. TQM has expanded to include so many approaches that its definition has become elusive. In this respect, TQM fits well with GMP and GLP guidelines, as they do not oblige a specific approach.

Reassessment

"Progress in modifying our concept of control has been and will be comparatively slow. In the first place, it requires the application of certain modern physical concepts; and in the second place it requires the application of statistical methods which up to the present time have been for the most part left undisturbed in the journal in which they appeared."

"Almost 30 years, that's typically the time-lapse between research and development of statistical tools until they hit the factory floor."

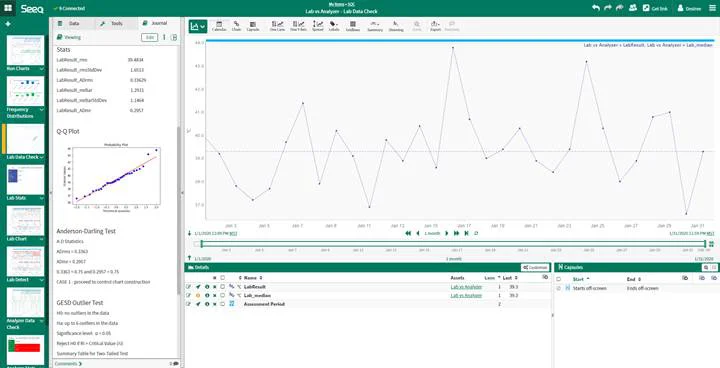

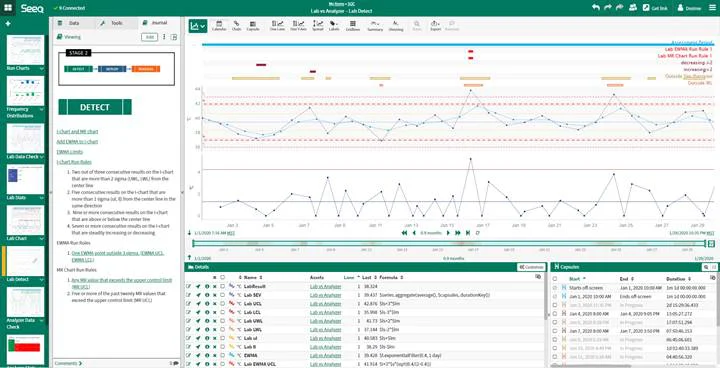

Alex T.C. Lau, a present-day leading quality professional, admits that it still takes a generation of time before known statistical methods are put into practice. We know what we need to do. We’ve defined it in our QC plans. The question remains. How? The answer is by leveraging the evolution of data analytics for process industries. We have reached the opportunity stage.

Overcoming Dynamic Challenges

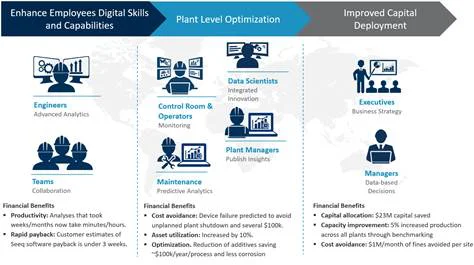

Through this quick historical review, we see QC moving from post-production to in-process, developing SQC methods, and expanding QC visibility from artisans or operators to supervisors, managers, on up to the organization’s top.

Over the last century, we’ve learned that relationship is the thread that runs through successful QC programs. Relationships require connection, and connection requires communication. If we want to communicate effectively, we need tools to connect our data with our analytics and analytics to our reporting.

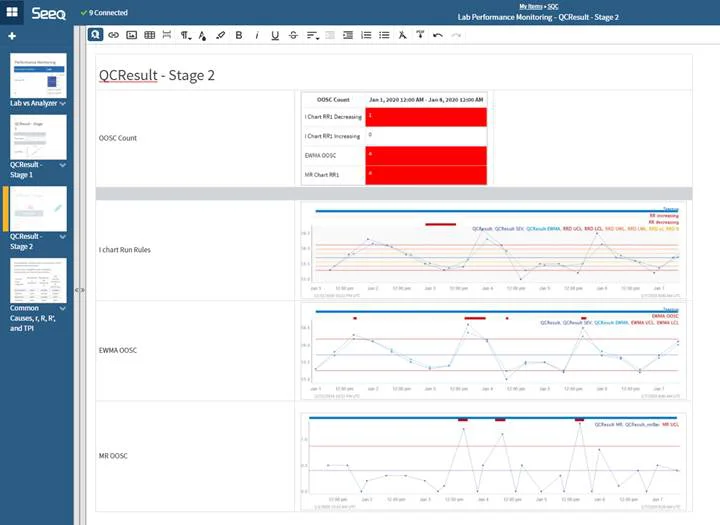

Seeq connects to your data in historians, LIMS, lab instruments, SQL-based data sources, and more, whether on-premises or in the cloud. Seeq Workbench and Seeq Data Lab connect your data to your analytics. Seeq Organizer connects your analytics directly to your reporting. Seeq can help you place your QC program on the stage.

Let’s take this opportunity to step up our quality game. We know the statistical methods. We know the organizational, communication, and relationship requirements. Now, we have access to the data as well as the tools to perform analyses and communicate organization-wide.

The next act in innovation is here. Let’s celebrate a century of learning and knowing quality control strategies by using the right tools to put these strategies into practice.